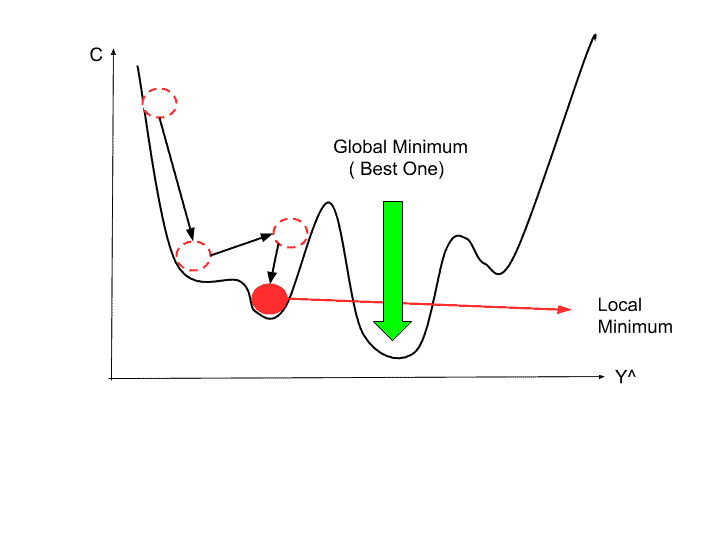

Autonomous algorithmic-based design is becoming a powerful optimization tool in many engineering fields [1, 2]. Nuclear engineering stands to gain from these powerful optimization tools. These automated tools can help explore vast design spaces where the optimal case is neither intuitive nor easy to find due to an intractable number of potential solutions. Automated design of nuclear systems can also help break away from traditional human biases that are often present. For example, an AI system chose to add a moderator material within a region that was intended to be a “fast spectrum” section for shaping a neutron energy spectrum of the Fast Neutron Source [3] at the University of Tennessee, Knoxville. Gradient descent [4, 5] has been shown to be an effective tool for engineering problems [6, 7, 8]. Nuclear engineering has little documentation on the use of these methods within the field [9, 10, 11, 12].

The autonomous design algorithm used in this work is a stochastic gradient descent method. This algorithm utilizes the derivatives of an objective function to iteratively take steps toward the minimum of the objective function and is designed to work well with a stochastic gradient, such as those estimated by Monte Carlo neutron transport simulations. Previously, this group published the demonstration of gradient descent for the design optimization of neutronic systems with quantitative constraints using the interior point method to optimize the k-eigenvalue, \(k_=976\).

This is the only change to the objective function and derivatives used in the first challenge problem, where 61 is replaced with 976. This problem aims to show that when we expand the number of variables within the system, the ADAM algorithm can still converge. The finer grid also gives ADAM more geometric freedom to form a better-resolved solution. It should also be noted that the sensitivities from TSUNAMI will have a larger relative uncertainty per pixel due to the finer spatial discretization.

In the above graph, the lowest point on the parabola occurs at x = 1. The objective of the gradient descent algorithm is to find the value of “x” such that “y” is minimum. “y” here is termed the objective function that the gradient descent algorithm operates upon, to descend to the lowest point. It is important to understand the above before proceeding further.

I use the linear regression problem to explain the gradient descent algorithm. The objective of regression, as we recall from this article, is to minimize the sum of squared residuals. We know that a function reaches its minimum value when the slope is equal to 0. By using this technique, we solved the linear regression problem and learnt the weight vector. The same problem can be solved by the gradient descent technique.

“Gradient descent is an iterative algorithm that starts from a random point on a function and travels down its slope in steps until it reaches the lowest point of that function.”

This algorithm is useful in cases where the optimal points cannot be found by equating the slope of the function to 0. In the case of linear regression, you can mentally map the sum of squared residuals as the function “y” and the weight vector as “x” in the parabola above.

The general idea is to start with a random point (in our parabola example, start with a random “x”) and find a way to update this point with each iteration such that we descend the slope. The steps are:

1. Find the slope of the objective function with respect to each parameter/feature. In other words, compute the gradient of the function. (To clarify, in the parabola example, differentiate “y” with respect to “x”. If we had more features like x1, x2 etc., we would take the partial derivative of “y” with respect to each of the features.)
2. Pick a random initial value for the parameters.
3. Update the gradient function by plugging in the parameter values.
4. Calculate the step sizes for each feature as: step size = gradient * learning rate.
5. Calculate the new parameters as: new params = old params - step size.
6. Repeat steps 3 to 5 until the gradient is almost 0.

The “learning rate” mentioned above is a flexible parameter which heavily influences the convergence of the algorithm.
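As a rough illustration, the gradient descent steps described in this post (compute the gradient, pick a random starting point, take steps of size gradient * learning rate, and stop once the gradient is almost 0) can be sketched in a few lines of Python. The post does not give the equation of the parabola, so the sketch assumes y = (x - 1)^2, which has its lowest point at x = 1. The function names, the learning rate of 0.1, the tolerance and the starting range are all illustrative choices, not values from the post.

```python
import random


def objective(x):
    # Assumed parabola (not given in the post): lowest point at x = 1.
    return (x - 1.0) ** 2


def gradient(x):
    # Derivative of the assumed parabola with respect to x.
    return 2.0 * (x - 1.0)


def gradient_descent(learning_rate=0.1, tolerance=1e-6, max_iters=10_000, seed=0):
    rng = random.Random(seed)
    # Pick a random initial value for the parameter.
    x = rng.uniform(-10.0, 10.0)
    for _ in range(max_iters):
        # Plug the current parameter value into the gradient function.
        grad = gradient(x)
        # Stop once the gradient is almost 0.
        if abs(grad) < tolerance:
            break
        # step size = gradient * learning rate
        step_size = grad * learning_rate
        # new params = old params - step size
        x = x - step_size
    return x


x_min = gradient_descent()
print(f"x = {x_min:.6f}, y = {objective(x_min):.3e}")
```

With these assumed settings the loop should settle very close to x = 1. For this particular parabola, a learning rate above 1.0 makes each step overshoot by more than it corrects, so the iterates move away from the minimum. That is one concrete way the learning rate influences convergence.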
